Knowledge HUB

Because we believe that sharing knowledge is the best way to innovate

Digital Audio: Digitization

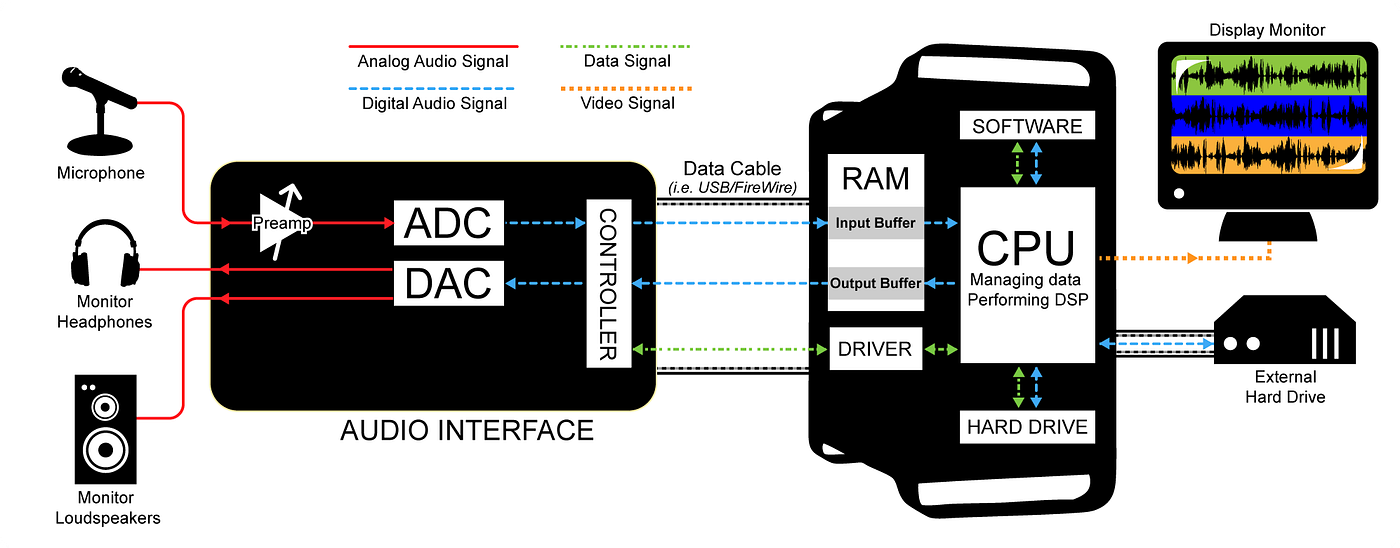

After reviewing the history of audio and how sound is created, let’s dive into digital signal processing by first understanding how analog audio becomes digital from an engineering perspective. What path does sound take to be digitized and played back again?

Overview

Analog audio consists of continuous sound waves, which must be converted into discrete digital data for storage, processing, transfer over a network or to the cloud, and playback on modern devices. The scheme is shown in the drawing below.

The conversion of the electrical signal into a digital signal is known as analog-to-digital conversion (ADC) and involves three key steps:

- Sampling

- Quantization

- Encoding

But before sampling, we need to be sure that the signal is suitable for the process. This takes two preparatory stages: transduction and anti-aliasing filtering. Let’s see how they work.

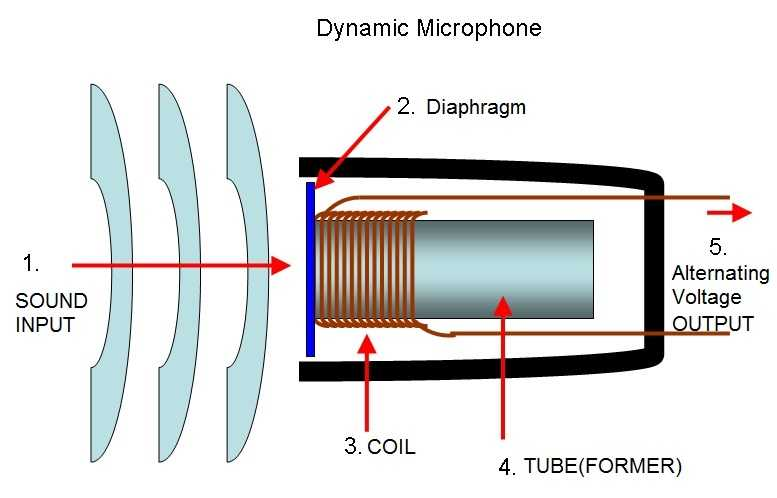

Transduction

Analog sound waves are first captured using a transducer, such as a microphone or pickup.

The transducer converts the sound waves into an electrical analog signal, which is a continuous waveform representing the amplitude and frequency of the sound.

Mic-level signals are typically in the millivolt (mV) range, often between 1 mV and 100 mV, depending on the microphone’s sensitivity and the loudness of the sound source. This is significantly weaker than other audio signal levels, such as the professional studio line level of 1.23 V RMS (+4 dBu).
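To put these levels in perspective, here is a minimal sketch that computes how much voltage gain would be needed to bring a mic-level signal up to the 1.23 V RMS line level quoted above. The function name `gain_db` and the three example mic voltages are illustrative assumptions, not values from a specific device.

```python
import math

def gain_db(v_in_rms, v_out_rms):
    """Voltage gain in decibels needed to go from v_in_rms to v_out_rms."""
    return 20 * math.log10(v_out_rms / v_in_rms)

LINE_LEVEL_V = 1.23  # studio line level in V RMS, taken from the text

# Example mic levels spanning the 1 mV to 100 mV range mentioned in the text.
for mic_v in (0.001, 0.010, 0.100):
    needed = gain_db(mic_v, LINE_LEVEL_V)
    print(f"{mic_v * 1000:5.0f} mV -> line level needs {needed:.1f} dB of gain")
```

The weakest signals need roughly 60 dB of gain, which is why a microphone is normally followed by a preamplifier before conversion.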

Signal amplitude can be expressed as a peak value, Vp, or as a root-mean-square (RMS) value, Vrms, which reflects the effective power of the signal rather than a simple average. For a sine wave, Vrms = Vp / √2 ≈ 0.707 × Vp.
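The 0.707 factor can be checked numerically. The sketch below builds one full period of a sine wave and computes its RMS value directly from the definition (square, mean, root). The helper names `rms` and `sine_period` are made up for this illustration.

```python
import math

def rms(samples):
    """Root-mean-square: square every sample, average, take the square root."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def sine_period(v_peak, n_points=1000):
    """One full period of a sine wave with the given peak voltage."""
    return [v_peak * math.sin(2 * math.pi * n / n_points) for n in range(n_points)]

v_peak = 1.0
wave = sine_period(v_peak)
print(f"peak = {max(wave):.3f} V, RMS = {rms(wave):.3f} V")
```

Note that the relation only holds for sine waves; other waveforms have a different ratio between peak and RMS.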

Anti-Aliasing Filtering

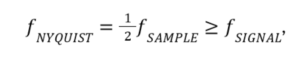

An input signal can contain many different frequencies. To capture it, we use a sampler with a defined sampling rate. According to the Nyquist theorem, the sampling rate must be more than twice the highest frequency in the signal. When the sampling rate is exactly twice the signal frequency, the result depends on phase: if the samples happen to fall on the zero crossings, the signal is not captured at all.
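The edge case where the sampling rate is exactly twice the signal frequency can be illustrated with a short sketch. It samples the same 1 kHz sine at 2 kHz with two different phases; the tone frequency and the function name `sample_sine` are arbitrary choices for the example.

```python
import math

def sample_sine(freq_hz, sample_rate_hz, phase_rad, n_samples=8):
    """Sample sin(2*pi*f*t + phase) at t = n / sample_rate."""
    return [
        math.sin(2 * math.pi * freq_hz * n / sample_rate_hz + phase_rad)
        for n in range(n_samples)
    ]

tone_hz = 1000.0
rate_hz = 2 * tone_hz  # exactly twice the signal frequency

# Phase 0: every sample lands on a zero crossing, so the tone disappears.
on_zero_crossings = sample_sine(tone_hz, rate_hz, phase_rad=0.0)
# Phase 90 degrees: every sample lands on a peak, so the tone is visible.
on_peaks = sample_sine(tone_hz, rate_hz, phase_rad=math.pi / 2)

print([round(s, 6) for s in on_zero_crossings])
print([round(s, 6) for s in on_peaks])
```

The same tone yields either silence or a full-scale alternating pattern depending only on phase, which is why practical systems sample at a rate comfortably above twice the highest frequency of interest.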

Nyquist-Shannon Theorem

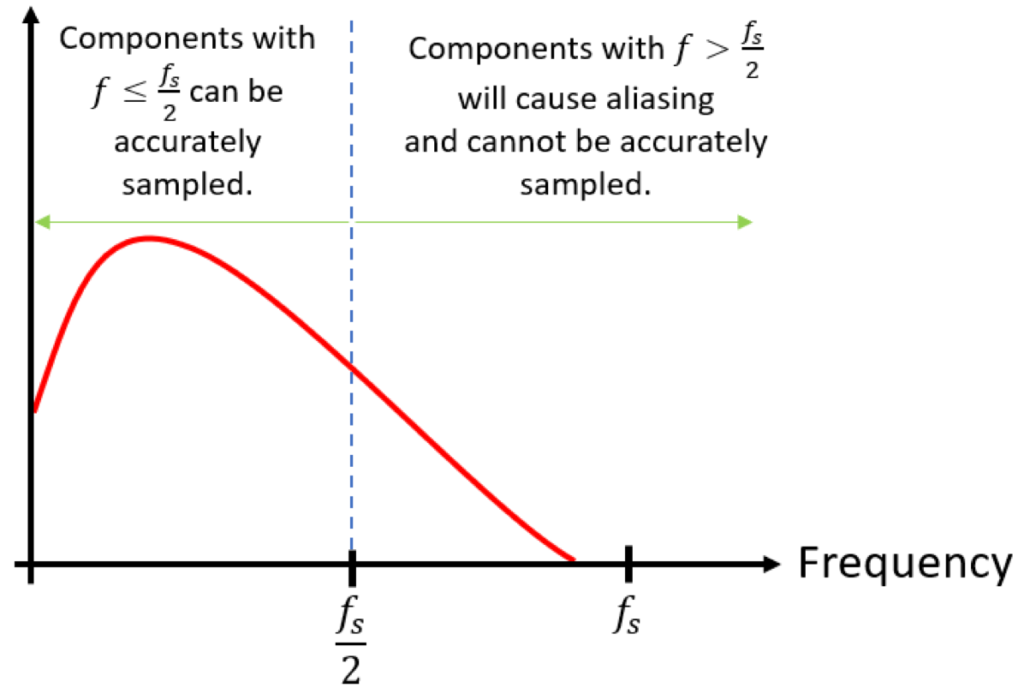

Frequencies above half the sampling rate (the Nyquist frequency) cause aliasing, where high frequency components are “folded back” into lower frequencies, creating unwanted artifacts in the sampled signal.
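The folding described above can be sketched in a few lines. Assuming an ideal sampler with no filter in front of it, a tone at frequency f sampled at rate fs shows up at its distance from the nearest multiple of fs. The helper names and the 30 kHz test tone are illustrative.

```python
import math

def alias_frequency(freq_hz, sample_rate_hz):
    """Apparent frequency of a tone after ideal sampling (no anti-aliasing filter)."""
    nearest_multiple = round(freq_hz / sample_rate_hz) * sample_rate_hz
    return abs(freq_hz - nearest_multiple)

def sample_cosine(freq_hz, sample_rate_hz, n_samples=16):
    """Sample cos(2*pi*f*t) at t = n / sample_rate."""
    return [
        math.cos(2 * math.pi * freq_hz * n / sample_rate_hz)
        for n in range(n_samples)
    ]

rate_hz = 44_100
ultrasonic_hz = 30_000  # above the 22.05 kHz Nyquist frequency
folded_hz = alias_frequency(ultrasonic_hz, rate_hz)
print(f"{ultrasonic_hz} Hz sampled at {rate_hz} Hz appears as {folded_hz} Hz")

# The two tones produce the same sample values, so they cannot be told apart.
original = sample_cosine(ultrasonic_hz, rate_hz)
alias = sample_cosine(folded_hz, rate_hz)
print(max(abs(a - b) for a, b in zip(original, alias)))
```

Once the samples are taken, nothing distinguishes the inaudible 30 kHz tone from an audible one at the folded frequency, so the problem has to be solved before sampling.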

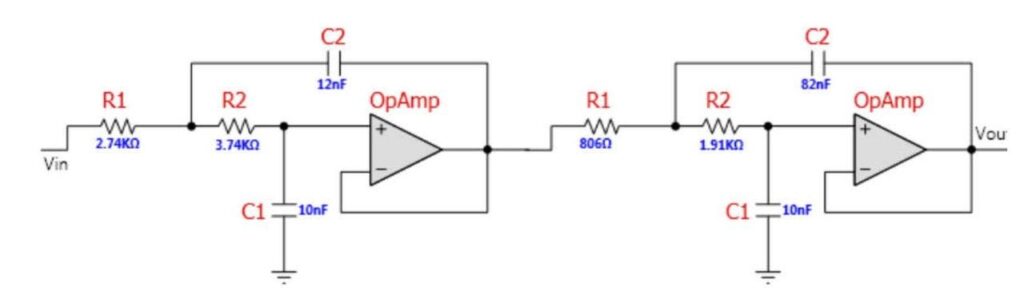

So before sampling, the analog signal is passed through a low-pass filter to remove those frequencies.

This prevents aliasing, which would otherwise distort the digital representation. It is implemented with an analog anti-aliasing filter placed ahead of the sampler.
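As a rough illustration of how much a low-pass filter attenuates frequencies above its cutoff, the sketch below evaluates the textbook magnitude response of a Butterworth low-pass filter. The Butterworth shape, the 20 kHz cutoff and the filter orders are assumptions chosen for the example; the text does not specify a particular filter design.

```python
import math

def butterworth_gain_db(freq_hz, cutoff_hz, order):
    """Gain in dB of an ideal Butterworth low-pass filter of the given order."""
    magnitude = 1.0 / math.sqrt(1.0 + (freq_hz / cutoff_hz) ** (2 * order))
    return 20 * math.log10(magnitude)

cutoff_hz = 20_000    # assumed cutoff at the edge of the audio band
unwanted_hz = 30_000  # a component that would alias at a 44.1 kHz sampling rate

for order in (1, 2, 4, 8):
    gain = butterworth_gain_db(unwanted_hz, cutoff_hz, order)
    print(f"order {order}: {gain:.1f} dB at {unwanted_hz} Hz")
```

Higher orders suppress the unwanted component more strongly, at the price of a more complex analog circuit.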

Now we can continue with the sampling.

Sampling

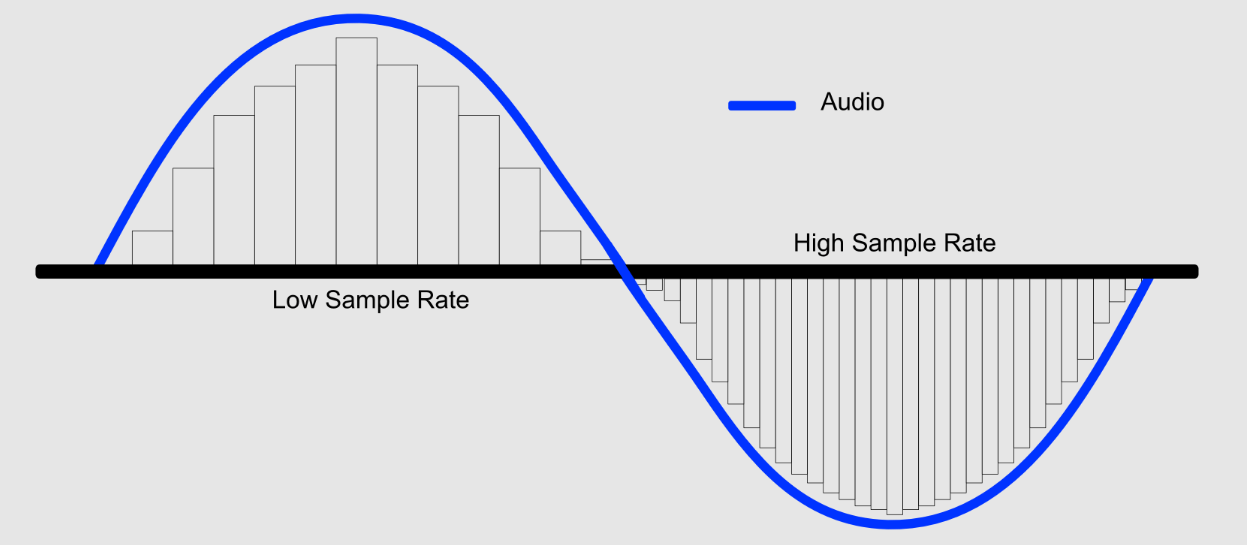

The analog signal is sampled at regular intervals to measure its amplitude at specific points in time.
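In code, sampling at regular intervals amounts to evaluating the signal at t = n / fs for n = 0, 1, 2, and so on. Here is a minimal sketch, where the continuous signal is modeled as a Python function of time and the 1 kHz tone is only an example input.

```python
import math

def sample_signal(signal, sample_rate_hz, duration_s):
    """Evaluate a continuous-time signal at evenly spaced instants n / sample_rate."""
    n_samples = round(duration_s * sample_rate_hz)
    return [signal(n / sample_rate_hz) for n in range(n_samples)]

def tone(t):
    """Example 'analog' input: a 1 kHz sine wave with a peak amplitude of 1."""
    return math.sin(2 * math.pi * 1000.0 * t)

# One millisecond (one period of the tone) sampled at 48 kHz.
samples = sample_signal(tone, 48_000, 0.001)
print(len(samples), "samples;", "first few:", [round(s, 3) for s in samples[:4]])
```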

The sampling rate

The sampling rate determines how many samples are taken per second. As mentioned previously, according to the Nyquist-Shannon theorem, the sampling rate must be more than twice the highest frequency in the signal to reproduce it accurately.

Common sample rates are 44.1 kHz, 48 kHz, 96 kHz and 192 kHz.
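For each of these rates, the Nyquist frequency (half the sampling rate) and the time between two samples follow directly. A small sketch, with helper names chosen for this example:

```python
COMMON_RATES_HZ = (44_100, 48_000, 96_000, 192_000)

def nyquist_hz(sample_rate_hz):
    """Upper limit of the frequencies that can be represented at this rate."""
    return sample_rate_hz / 2

def sample_period_us(sample_rate_hz):
    """Time between two consecutive samples, in microseconds."""
    return 1_000_000 / sample_rate_hz

for rate in COMMON_RATES_HZ:
    print(
        f"{rate / 1000:6.1f} kHz: Nyquist {nyquist_hz(rate) / 1000:5.2f} kHz, "
        f"one sample every {sample_period_us(rate):.2f} us"
    )
```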

44.1 kHz

This rate is used for CD-quality audio, for streaming platforms like Spotify, and commonly for MP3 files.

48 kHz

Primary Use: Professional audio and video production.

96 kHz

Studio Recording: Used for professional-grade and home recording where additional detail is required, especially in mixing and mastering stages.

Audiophile Listening: Favored by audiophiles for its ability to encode frequencies beyond human hearing, offering cleaner signal processing.

192 kHz

Primary Use: Ultra-high-resolution audio and specialized applications like archival recording. Used in studios for preserving master recordings with maximum fidelity.

Scientific Research: Utilized in applications requiring analysis of ultrasonic frequencies.

Sample & Hold

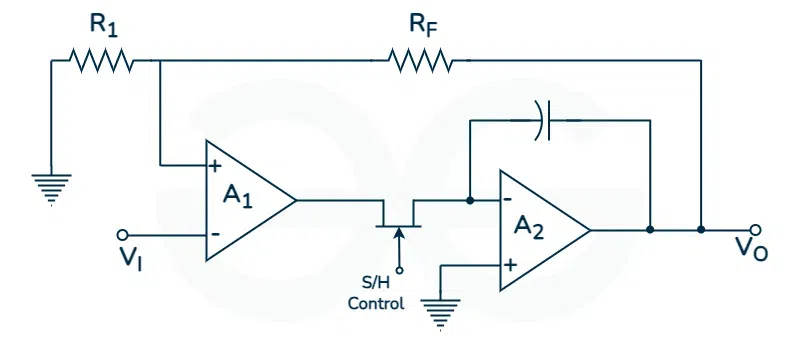

In many applications, including those using Successive Approximation Register (SAR) Analog-to-Digital Converters (ADC), a sample and hold (S/H) circuit is often used before the ADC to ensure that the analog input voltage remains stable during the conversion process. This is crucial because the input signal may change during the time it takes for the ADC to perform the conversion, leading to inaccuracies. The S/H circuit “samples” the input voltage and then “holds” it constant for the ADC.

Sample and Hold filter

The sample-and-hold circuit is required to keep the voltage entering the ADC constant while the conversion is taking place. After that comes the next stage of signal digitization: quantization.
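The behavior of a sample-and-hold stage can be modeled as a zero-order hold. In the sketch below, a finely spaced list of values stands in for the continuously changing analog input, and `hold_length` (a name made up for this example) is the number of input steps for which each captured value is held.

```python
def sample_and_hold(analog_values, hold_length):
    """Capture the input every hold_length steps and hold it constant in between."""
    held = 0.0
    output = []
    for index, value in enumerate(analog_values):
        if index % hold_length == 0:
            held = value  # "sample": capture the current input
        output.append(held)  # "hold": keep presenting the captured value
    return output

ramp = [0, 1, 2, 3, 4, 5, 6]  # stand-in for a rising analog voltage
print(sample_and_hold(ramp, 3))  # [0, 0, 0, 3, 3, 3, 6]
```

While the input keeps rising, the output stays flat for three steps at a time, giving the converter a stable voltage to work on.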

While SAR ADCs have their place, other technologies are frequently employed in audio analog-to-digital conversion, each with its own strengths:

Delta-Sigma ADCs (ΔΣ ADCs):

These are very common in high-quality audio applications. They excel at achieving high resolution (24-bit and beyond) and a high signal-to-noise ratio, which is crucial for capturing the nuances of audio signals. Delta-sigma converters are used in:

- Professional audio recording equipment

- High-end audio interfaces

- CD players and other high-fidelity playback devices

Pipelined ADCs:

While less common in typical audio recording, pipelined ADCs can be used in applications where very high bandwidth is required, such as in some professional audio applications and audio testing equipment.
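To give a feel for how the delta-sigma approach mentioned above differs from converting each sample directly, here is a toy first-order delta-sigma modulator. It is a simplified textbook model under stated assumptions (a single integrator, a 1-bit quantizer, inputs between -1 and 1), not the architecture of any particular converter chip.

```python
def delta_sigma_modulate(samples):
    """First-order delta-sigma modulator: turns inputs in (-1, 1) into a +1/-1 stream."""
    integrator = 0.0
    feedback = 0.0
    bits = []
    for x in samples:
        integrator += x - feedback            # accumulate the error (the "delta" and "sigma")
        bit = 1 if integrator >= 0 else -1    # 1-bit quantizer
        bits.append(bit)
        feedback = float(bit)                 # fed back and subtracted on the next step
    return bits

# A constant input of 0.5, heavily oversampled.
stream = delta_sigma_modulate([0.5] * 1000)
average = sum(stream) / len(stream)
print(stream[:12], "average:", round(average, 3))
```

Each output value is only +1 or -1, yet the average of the stream tracks the input. Real converters follow the modulator with digital filtering and decimation to turn the fast 1-bit stream into high-resolution samples.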

The choice of ADC technology in audio applications depends on factors such as:

- Desired audio quality: Higher resolution and SNR are needed for professional recording than for casual listening.

- Cost constraints: Delta-sigma ADCs can be more expensive than SAR ADCs.

- Power consumption: Portable audio devices may favor lower-power ADCs.

- Speed requirements: Some audio applications require very high sampling rates.

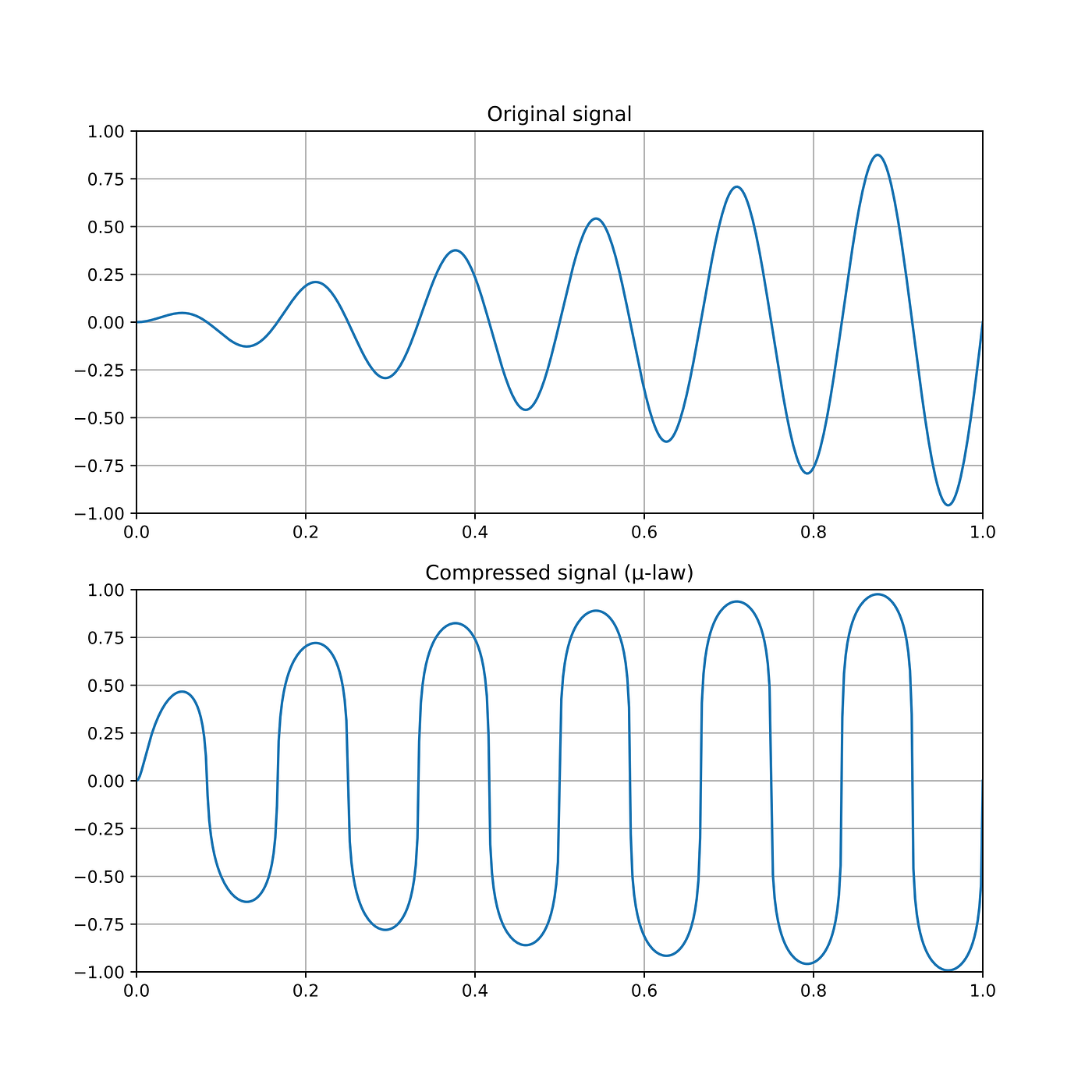

Companders

Compander is a contraction of compressor-expander. Companding is a double-ended process used for noise reduction or to increase the apparent dynamic range or headroom in recording, transmission and signal processing systems. The process uses a fixed-rate compressor at the start and a complementary expander at the end. Companding is used primarily in specialized applications such as wireless microphones, telephony systems and analog-to-digital conversion in environments with limited dynamic range.

Companding involves compressing the dynamic range of an audio signal before quantization and expanding it afterward, which helps reduce quantization noise and improve signal-to-noise ratio (SNR) for signals with a large dynamic range.
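As one concrete example of companding, the sketch below uses the mu-law curve known from telephony (with mu = 255). The text does not name a specific companding law, so this choice is an assumption, as are the simplified 8-bit uniform quantizer and the function names.

```python
import math

MU = 255.0  # mu-law parameter, assumed for this example

def compress(x):
    """Mu-law compression of a sample in [-1, 1]."""
    return math.copysign(math.log1p(MU * abs(x)) / math.log1p(MU), x)

def expand(y):
    """Inverse of compress: restores the original scale."""
    return math.copysign(((1.0 + MU) ** abs(y) - 1.0) / MU, y)

def quantize(x, bits=8):
    """Simplified uniform quantizer: round to the nearest step on [-1, 1]."""
    step = 2.0 / (2 ** bits)
    return round(x / step) * step

quiet = 0.01  # a low-level sample, where uniform quantization is relatively coarse
uniform_error = abs(quantize(quiet) - quiet)
companded_error = abs(expand(quantize(compress(quiet))) - quiet)
print(f"uniform error:   {uniform_error:.6f}")
print(f"companded error: {companded_error:.6f}")
```

For quiet samples the companded path ends up much closer to the original value, because compression spreads low-level signals across more quantization steps. The trade-off is coarser resolution for loud samples.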

In the next newsletter I will cover Quantization.
